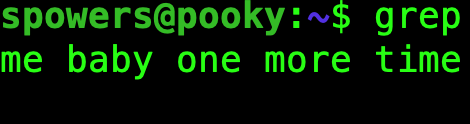

[NOTE: This is a piece I wrote for Linux Journal a few years back. It’s still very relevant, and still important information for anyone dabbling in crypto. This seems like a good time of year to repost it.]

One for you, one for me, and 0.15366BTC for Uncle Sam.

When people ask me about bitcoin, it’s usually because someone told them about my days as an early miner. I had thousands of bitcoin, and I sold them for around a dollar each. At the time, it was awesome, but looking back—well you can do the math. I’ve been mining and trading with cryptocurrency ever since it was invented, but it’s only over the past few years that I’ve been concerned about taxes.

In the beginning, no one knew how to handle the tax implications of bitcoin. In fact, that was one of the favorite aspects of the idea for most folks. It wasn’t “money”, so it couldn’t be taxed. We could start an entire societal revolution without government oversight! Those times have changed, and now the government (at least here in the US) very much does expect to get taxes on cryptocurrency gains. And you know what? It’s very, very complicated, and few tax professionals know how to handle it.

What Is Taxable?

Cryptocurrencies (bitcoin, litecoin, ethereum and any of the 10,000 other altcoins) are taxed based on the “gains” you make with them. (Often in this article I mention bitcoin specifically, but the rules are the same for all cryptocurrency.) Gains are considered income, and income is taxed. What sorts of things are considered gains? Tons. Here are a few examples:

- Mining.

- Selling bitcoin for cash.

- Trading one crypto coin for another on an exchange.

- Buying something directly with bitcoin.

The frustrating part about taxes and cryptocurrency is that every transaction must be calculated. See, with cash transactions, a dollar is always worth a dollar (according to the government, let’s not get into a discussion about fiat currency). But with cryptocurrency, at any given moment, the coin is worth a certain amount of dollars. Since we’re taxed on dollars, that variance must be tracked so we are sure to report how much “money” we had to spend.
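To make that tracking concrete, here is a minimal sketch of the arithmetic for a single purchase made with bitcoin. The function name and the numbers are hypothetical, chosen only for illustration, and the sketch ignores fees and anything else a tax professional might factor in.

```python
from decimal import Decimal

def spend_gain(btc_spent, price_when_acquired, price_when_spent):
    """Dollar gain (negative means a loss) realized by spending some BTC.

    Prices are dollars per BTC. This is a simplified illustration only.
    """
    cost_basis = btc_spent * price_when_acquired  # dollars the coins were worth when acquired
    proceeds = btc_spent * price_when_spent       # dollars the coins were worth when spent
    return proceeds - cost_basis

# Hypothetical numbers: 0.01 BTC acquired at $1,000/BTC,
# then spent on a purchase when bitcoin is at $1,500/BTC.
gain = spend_gain(Decimal("0.01"), Decimal("1000"), Decimal("1500"))
print(f"Gain to report on this purchase: ${gain}")
```

Decimal is used rather than floating point so that fractional coin amounts don't pick up rounding noise.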

It gets even more complicated, because we’re taxed on the same bitcoin over and over. It’s not “double dipping”, because the taxes are only on the gains and losses that occurred between transactions. It’s not unfair, but it’s insanely complex. Let’s look at the life of a bitcoin from the moment it’s mined. For simplicity’s sake, let’s say it took exactly one day to mine one bitcoin:

1) After 24 hours of mining, I receive 1BTC. The market price for bitcoin that day was $1,000 per BTC. It took me $100 worth of electricity that day to mine (yes, I need to track the electrical usage if I want to deduct it as a loss).

Taxable income for day 1: $900.

2) The next day, I trade the bitcoin for ethereum on an exchange. The cost of bitcoin on this day is $1,500. The cost of ethereum on this day is $150. Since the value of my 1 bitcoin has increased since I mined it, when I make the trade on the exchange, I have to claim the increase in price as income. I now own 10 ethereum, but because of the bitcoin value increase, I now have more income. There are no deductions for electricity, because I already had the bitcoin; I’m just paying the capital gains on the price increase.

Taxable income for day 2: $500.

3) The next day, the price of ethereum skyrockets to $300, and the price of bitcoin plummets to $1,000. I decide to trade my 10 ethereum for 3BTC. When I got my ethereum, they were worth $1,500, but when I just traded them for BTC, they were worth $3,000. So I made $1,500 worth of profit.

Taxable income for day 3: $1,500.

4) Finally, on the 4th day, even though the price is only $1,200, I decide to sell my bitcoin for cash. I have 3BTC, so I get $3,600 in cash. Looking back, when I got those 3BTC, they were worth $1,000 each, so that means I’ve made another $600 profit.

Taxable income for day 4: $600.

It might seem unfair to be taxed over and over on the same initial investment, but if you break down what’s happening, it’s clear we’re only getting taxed on price increases. If the price drops and then we sell, the gain for that transaction is negative, and that loss is a deduction. If you have to pay a lot in taxes on bitcoin, it means you’ve made a lot of money with bitcoin!
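The four-day walk-through above can be reproduced with a few lines of arithmetic. This is only a sketch using the simplified numbers from the example; the function names are made up for illustration, and treating the electricity as a straight deduction is the same simplification the example makes.

```python
def mining_income(coins, price, electricity_cost):
    """Value of newly mined coins minus the electricity spent mining them."""
    return coins * price - electricity_cost

def disposal_gain(units, price_at_acquisition, price_at_disposal):
    """Gain (or loss, if negative) when coins are traded, spent, or sold."""
    return units * (price_at_disposal - price_at_acquisition)

daily_income = [
    mining_income(1, 1000, 100),    # day 1: mine 1 BTC at $1,000, $100 electricity
    disposal_gain(1, 1000, 1500),   # day 2: trade 1 BTC (now $1,500) for 10 ETH
    disposal_gain(10, 150, 300),    # day 3: trade 10 ETH (now $300 each) for 3 BTC
    disposal_gain(3, 1000, 1200),   # day 4: sell 3 BTC for cash at $1,200 each
]

for day, income in enumerate(daily_income, start=1):
    print(f"Taxable income for day {day}: ${income:,}")
print(f"Total: ${sum(daily_income):,}")
```

The total is a handy sanity check: it equals the $3,600 in cash I ended up with, minus the $100 of electricity I started with.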

Exceptions?

There are a few exceptions to the rules—well, they’re not really exceptions, but more clarifications. Since we’re taxed only on gains, it’s important to think through the life of your bitcoin. For example:

- Employer paying in bitcoin: I work for a company that will pay me in bitcoin if I desire. Rather than a check going into my bank account, every two weeks a bitcoin deposit goes into my wallet. I need to track the initial cost of the bitcoin as I receive it, but usually employers will send you the “after taxes” amount. That means the bitcoin you receive already has been taxed. You still need to track what it’s worth on the day you receive it in order to determine gain/loss when you eventually spend it, but the initial total has most likely already been taxed. (Check with your employer to be sure though.)

- Moving bitcoin from one wallet to another: this is actually a tougher question and is something worth talking about with your tax professional. Let’s say you move your bitcoin from a BitPay wallet to your fancy new Trezor hardware wallet. Do you need to count the gains/losses since the time it was initially put into your BitPay wallet? Regardless of what you and your tax professional decide, you’re not going to “lose” either way. If you decide to report the gain/loss, your cost basis for that bitcoin changes to the current date and price. If you don’t count a gain/loss, you stick to the initial cost basis from the deposit into the BitPay wallet.

The moral of the story here is to find a tax professional comfortable with cryptocurrency.

Accounting Complications

If you’re a finance person, terms like FIFO and LIFO make perfect sense to you. (FIFO = First In First Out, and LIFO = Last In First Out.) Although it’s certainly easy to understand, it wasn’t something I’d considered before the world of bitcoin. Here’s an example of how they differ:

- Day 1: buy 1BTC for $100.

- Day 2: buy 1BTC for $500.

- Day 3: buy 1BTC for $1,000.

- Day 4: buy 1BTC for $10,000.

- Day 5: sell 1BTC for $12,000.

If I use FIFO to determine my gains and losses, when I sell the 1BTC on day 5, I have to claim a capital gain of $11,900. That’s considered taxable income. However, if I use LIFO to determine the gains and losses, when I sell the 1BTC on day 5, I have to claim only $2,000 worth of capital gains. The question is basically “which BTC am I selling?”
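Here is a small sketch of that “which BTC am I selling?” question, using the purchases listed above. It assumes every lot is exactly 1BTC and that one whole lot is sold. Real records have fractional amounts, so treat the function (a hypothetical name) as an illustration rather than a tax tool.

```python
from collections import deque

def gain_on_sale(purchase_prices, sale_price, method="FIFO"):
    """Capital gain from selling one 1-BTC lot.

    purchase_prices: dollars paid per lot, oldest first (each lot is 1 BTC).
    method: "FIFO" sells the oldest lot, "LIFO" sells the newest.
    """
    lots = deque(purchase_prices)
    if method == "FIFO":
        cost_basis = lots.popleft()   # oldest purchase is sold first
    elif method == "LIFO":
        cost_basis = lots.pop()       # newest purchase is sold first
    else:
        raise ValueError(f"unknown method: {method}")
    return sale_price - cost_basis

purchases = [100, 500, 1000, 10000]   # days 1 through 4
fifo_gain = gain_on_sale(purchases, 12000, "FIFO")
lifo_gain = gain_on_sale(purchases, 12000, "LIFO")
print(f"FIFO gain: ${fifo_gain:,}")
print(f"LIFO gain: ${lifo_gain:,}")
```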

There are other accounting methods too, but FIFO and LIFO are the most common, and they should be okay to use with the IRS. Please note, however, that you can’t mix and match FIFO/LIFO. You need to pick one and stick with it. In fact, if you change the method from year to year, you need to change the method officially with the IRS, which is another task for your tax professional.

The Long and Short of It

Another complication when it comes to calculating taxes doesn’t have to do with gains or losses, but rather the types of gains and losses. Specifically, if you have an asset (such as bitcoin) for longer than a year before you sell it, it’s considered a long-term gain. That income is taxed at a lower rate than if you sell it within the first year of ownership. With bitcoin, it can be complicated if you move the currency from wallet to wallet. But if you can show you’ve had the bitcoin for more than a year, it’s very much worth the effort, because the long-term gain tax is significantly lower.
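Checking the holding period is simple date arithmetic. The sketch below treats “longer than a year” as “sold after the one-year anniversary of the acquisition date”. The function name and dates are hypothetical, and the leap-day handling is my own assumption, so confirm edge cases with a tax professional.

```python
from datetime import date

def is_long_term(acquired, sold):
    """True if the asset was held for more than one year before the sale."""
    try:
        anniversary = acquired.replace(year=acquired.year + 1)
    except ValueError:
        # Acquired on Feb 29: assume Feb 28 of the following year
        # as the anniversary (an assumption, not official guidance).
        anniversary = date(acquired.year + 1, 2, 28)
    return sold > anniversary

# Hypothetical dates for illustration.
sale_day = date(2018, 2, 15)
print(is_long_term(date(2017, 1, 15), sale_day))  # held about 13 months
print(is_long_term(date(2017, 6, 1), sale_day))   # held about 8 months
```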

This was a big factor in my decision on whether to cash in ethereum or bitcoin for a large purchase I made this year. I had the bitcoin in a wallet, but it didn’t “age” as bitcoin for a full year. The ethereum had just been sitting in my Coinbase account for 13 months. I ended up saving significant money by selling the ethereum instead of a comparable amount of bitcoin, even though the capital gain amount might have been similar. The difference between long-term and short-term tax rates is significant enough that it’s worth waiting to sell if you can.

Overwhelmed?

If you’ve made only a couple transactions during the past year, it almost can be fun to figure out your gains/losses. If you’re like me, however, and you try to purchase things with bitcoin at every possible opportunity, it can become overwhelming fast. The first thing I want to stress is that it’s important to talk to someone who is familiar with cryptocurrency and taxes. This article wasn’t intended to prepare you for handling the tax forms yourself, but rather to show why you might need professional help!

Unfortunately, if you live in a remote rural area like I do, finding a tax professional who is familiar with bitcoin can be tough—or potentially impossible. The good news is that the IRS is handling cryptocurrency like any other capital gain/loss, so with the proper help, any good tax person should be able to get through it. FIFO, LIFO, cost basis and terms like those aren’t specific to bitcoin. The parts that are specific to bitcoin can be complicated, but there is an incredible resource online that will help.

If you head over to BitcoinTaxes (Figure 1), you’ll find an incredible website designed for bitcoin and crypto enthusiasts. I think there is a free offering for folks with just a handful of transactions, but for $29, I was able to use the site to track every single cryptocurrency transaction I made throughout the year. BitcoinTaxes has some incredible features:

- Automatically calculates rates based on historical market prices.

- Tracks gains/losses including long-term/short-term ramifications.

- Handles purchases made with bitcoin individually and determines gains/losses per transaction (Figure 2).

- Supports multiple accounting methods (FIFO/LIFO).

- Integrates with online exchanges/wallets to pull data.

- Creates tax forms.

The last bullet point is really awesome. The intricacies of bitcoin and taxes are complicated, but the BitcoinTaxes site can fill out the forms for you. Once you’ve entered all your information, you can print the tax forms so you can deliver them to your tax professional. The process for determining what goes on the forms might be unfamiliar to many tax preparers, but the forms you get from BitcoinTaxes are standard IRS tax forms, which the tax pro will fully understand.

Figure 1. The BitcoinTaxes site makes calculating tax burdens far less burdensome.

Figure 2. If you do the math, you can see the price of bitcoin was drastically different for each transaction.

Do you need to pay $29 in order to calculate all your cryptocurrency tax information properly? Certainly not. But for me, the site saved me so many hours of labor that it was well worth it. Plus, while I’m a pretty smart guy, the BitcoinTaxes site was designed with the sole purpose of calculating tax information. It’s nice to have that expertise on hand.

My parting advice is to please take taxes seriously, especially this year. The IRS has been working hard to get information from companies like Coinbase regarding taxpayers’ gains/losses. In fact, Coinbase was required to give the IRS financial records on 14,355 of its users. Granted, those accounts belong only to people who have more than $20,000 worth of transactions, but it’s just the first step. Reporting things properly now will make life far less stressful down the road. And remember, if you have a ton of taxes to pay for your cryptocurrency, that means you made even more money in profit. It doesn’t make paying the IRS any more fun, but it helps make the sore spot in your wallet hurt a little less.